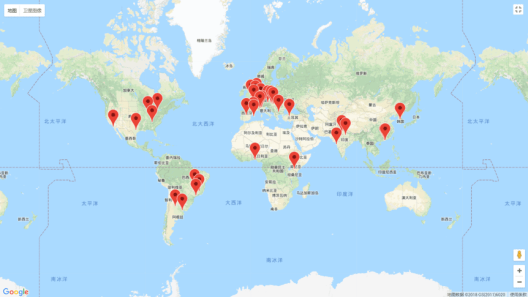

Summary: We used API (Application Programming Interface) as the source to extract data from the USGS database in order to analyze the last 50 years and estimate the frequency of earthquakes in Southeast Asia. With the help of Python, the extracted data was exported into CSV file for categorizing different parameters such as by country, magnitude and year.

Introduction

Application programming interface (API) is commonly used to extract data from a remote website server. In layman term, API is used to retrieve data or information from another program. There are several websites such as Facebook, the USGS, Twitter, Reddit, which offer web-based API helping get information or data.

In order to retrieve data, we will send requests to the host web server where you want to extract the data and tweak parameters like URL in the module to connect to the server. Different websites have different requests format and can easily be accessed through the host’s website.

In our module, we will be extracting the data of earthquakes that hit Southeast Asia in the last 50 years from the web server of USGS using API.

One of the most frequent natural disasters on planet earth is earthquake. The sharp unleash of energy from Earth’s lithosphere generates seismic waves which lead to sudden shaking of the surface of the Earth. This natural disaster has led to the death of thousands of millions of people all around the world.

The strength of earthquakes is measured through Richter magnitude scale or just magnitude. The magnitude is the scale which ranges from 1-10.

The most highly sensitive region in the world prone to the earthquakes are Southeast Asian countries. To find the trend in the region, we extracted 50 years data from USGS by using API and convert the numbers into CSV file through Python coding for a comprehensive understanding of earthquakes situation in Southeast Asia.